Cloud Cost Optimization Manage and autoscale your K8s cluster for savings of 50% and more.If (number of running tasks = number of messages, but these running tasks are already busy processing messages, these tasks will not end up taking one of these messages out of the queue and we may end up with messages that have to wait a very long time to be processed. So something needs to spawn more containers when traffic increases. However this suffers from the problem that it would not auto-scale as the number of messages in the queue increases. We could set the minimum number of tasks we want running to be a number > 0 to ensure all messages are processed at some point. In this case, there would be no cold start assuming a container is up spinning for messages. We remove the lambda from the flow and just have SQS -> ECS rather than SQS -> Lambda -> ECS. task finishes, and container can be brought down

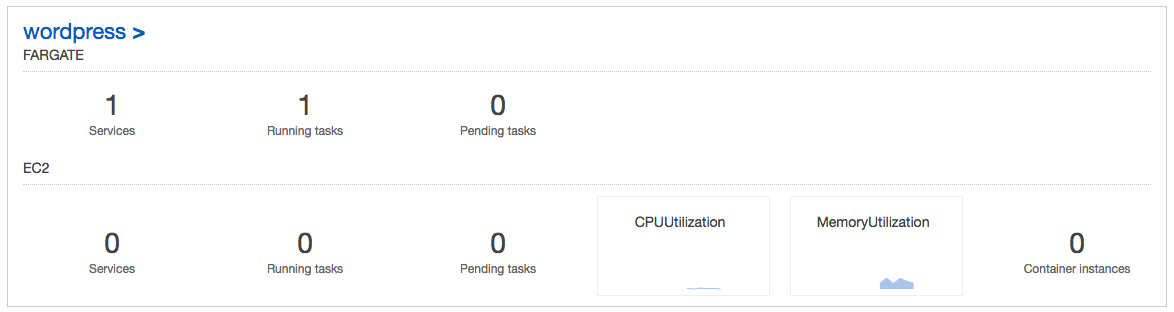

In this updated flow we'd create an ECS task that looks like the following: I've been toying with some ideas for updating this flow. Additionally, the cost of constantly running container or two would not be too problematic. This latency is directly visible to the user and is particularly unacceptable if the users job is not even particularly large yet they still wait for minutes for the container to boot up. This has the problem that a new ECS task is started with each request, so a new container must be booted up which can take minutes. What we currently do when a user submits a job request is send a message with a job id/reference to an SQS queue, have AWS lambda poll that queue and upon receiving a message, lambda starts an ECS task (SQS -> Lambda -> ECS). We want jobs to be as independent from one another as possible so we chose to run each job in its own container in Amazon ECS. We don’t want to limit the size of the job these users are submitting so this job can can in theory run for a very long period of time and require a large amount of memory (this rules out AWS Lambda as a compute engine option). select a large number of items and ask to update them all in some way). Here you don't have a LB though.you are doing that all in Lambda code since assumption is you only have 1 task running.įor a website I’m developing on AWS, a user can submit a large job (ex. You use the scaling rules to shut it down as traffic went away (typically minutes of low activity). What you do here is have the call initially come into a Lambda function that will START UP your Fargate service. This is tricker - and assumes that the caller will retry after 30s timeout.

This does require you to port your app though which can be difficult. The startup of a lambda 'container' is very fast and if you get only a few calls a day it will cost pennies. This means you only get charged for calls coming through. : A dedicated ALB + Fargate will always have a provisioned capacity and can be overkill for rarely called code.ġ) Port your app to Lambda and use API Gateway : Cost Savings is driver and traffic is randomly/low volume called via sync HTTP/S calls. You have several options here though depending on how known interval traffic you have and whether you can prepare in advance. To be fair, it typically takes 15-30s for a container task to spin up so the fact you have it behind an active Load Balancer would give a fairly negative experience to callers for very infrequent tasks since they would timeout. If you choose to use AWS Fargate with ALB, as you mention, you will never get it to turn off.
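One of the options discussed in this post has a Lambda function start the Fargate service when a call arrives, with the caller retrying until a task is up. A minimal sketch of that idea follows, using boto3's ECS `describe_services` and `update_service` calls. The cluster and service names and the 503 response with a `Retry-After` header are hypothetical choices for illustration, not something the original post specifies.

```python
"""Sketch: a Lambda handler that wakes a Fargate service scaled to zero.

CLUSTER, SERVICE, and the 503 retry response are illustrative
assumptions, not taken from the original post.
"""
import json

CLUSTER = "jobs-cluster"   # hypothetical ECS cluster name
SERVICE = "jobs-service"   # hypothetical ECS service name


def ensure_service_running(ecs, cluster=CLUSTER, service=SERVICE):
    """Return True if a task is already running; otherwise request one."""
    described = ecs.describe_services(cluster=cluster, services=[service])
    svc = described["services"][0]
    if svc["runningCount"] > 0:
        return True
    if svc["desiredCount"] == 0:
        # Scale from zero to one task; the container still needs time to boot.
        ecs.update_service(cluster=cluster, service=service, desiredCount=1)
    return False


def handler(event, context, ecs=None):
    """Lambda entry point; `ecs` can be injected for local testing."""
    if ecs is None:
        import boto3  # imported lazily so the module loads without boto3
        ecs = boto3.client("ecs")
    if ensure_service_running(ecs):
        return {"statusCode": 200, "body": json.dumps({"status": "ready"})}
    # Ask the caller to retry, matching the retry assumption in the text.
    return {
        "statusCode": 503,
        "headers": {"Retry-After": "30"},
        "body": json.dumps({"status": "starting"}),
    }
```

The handler only requests a start once: if the desired count is already 1 but no task is running yet, it returns the retry response without calling `update_service` again. Forwarding the request to the running task is left out of this sketch.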

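For the SQS -> ECS flow, the open concern is that nothing spawns more containers as the queue grows. The sketch below shows one way the desired task count could be derived from queue depth, using the SQS `get_queue_attributes` and ECS `update_service` calls from boto3. It assumes one job per task, as described in the post; `MIN_TASKS`, `MAX_TASKS`, and the function names are hypothetical.

```python
"""Sketch: derive an ECS desired task count from SQS queue depth.

Assumptions (not from the original post): one job per task, and the
hypothetical limits MIN_TASKS and MAX_TASKS.
"""
MIN_TASKS = 1    # keep one warm task so small jobs avoid a cold start
MAX_TASKS = 20   # hypothetical upper bound to cap cost


def desired_task_count(visible, in_flight, min_tasks=MIN_TASKS, max_tasks=MAX_TASKS):
    """One task per waiting or in-progress message, clamped to the limits."""
    backlog = visible + in_flight
    return max(min_tasks, min(max_tasks, backlog))


def queue_backlog(sqs, queue_url):
    """Read the approximate visible and in-flight message counts from SQS."""
    attrs = sqs.get_queue_attributes(
        QueueUrl=queue_url,
        AttributeNames=[
            "ApproximateNumberOfMessages",
            "ApproximateNumberOfMessagesNotVisible",
        ],
    )["Attributes"]
    # SQS returns attribute values as strings.
    return (
        int(attrs["ApproximateNumberOfMessages"]),
        int(attrs["ApproximateNumberOfMessagesNotVisible"]),
    )


def rescale(sqs, ecs, queue_url, cluster, service):
    """Set the service's desired count from the current backlog."""
    visible, in_flight = queue_backlog(sqs, queue_url)
    target = desired_task_count(visible, in_flight)
    ecs.update_service(cluster=cluster, service=service, desiredCount=target)
    return target
```

Counting in-flight messages keeps the target from dropping below the number of jobs still being processed, provided the queue's visibility timeout outlasts the job. Something would still have to call `rescale` periodically, and a scale-in could stop a busy task, so this is only an illustration of the calculation rather than a complete autoscaler.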